HPT 1.5 Edge: The Best Open-Source 4B Multimodal LLM for Edge and Mobile Devices

June 6th, 2024 - HyperGAI Team

Overview

We are excited to announce the release of HPT 1.5 Edge, our advanced 4.2B-parameter multimodal LLM for edge devices. Edge pushes the boundaries of efficiency without sacrificing performance. Despite its small size, Edge performs strongly across a wide range of benchmarks, even surpassing several leading proprietary models. Its key features are:

- Impressive results at an affordable cost. Edge is extremely efficient, yet it delivers competitive performance in many scenarios.

- Transparency. We continue to advance our contribution to the open-source community by releasing the HPT 1.5 Edge model under the Apache 2.0 license.

Model Details

HPT 1.5 Edge belongs to the category of models for edge devices, which have fewer than 5B parameters. Edge follows the same design principles as its predecessors but is trained with an improved data mixture and a more advanced optimization process, so it delivers strong performance while remaining efficient enough for edge devices. Its components are a pre-trained SigLIP vision encoder (siglip-so400m-patch14-384), a pre-trained Phi-3-mini-128k-instruct LLM, and our proposed H-Former, which connects the ViT and the LLM.

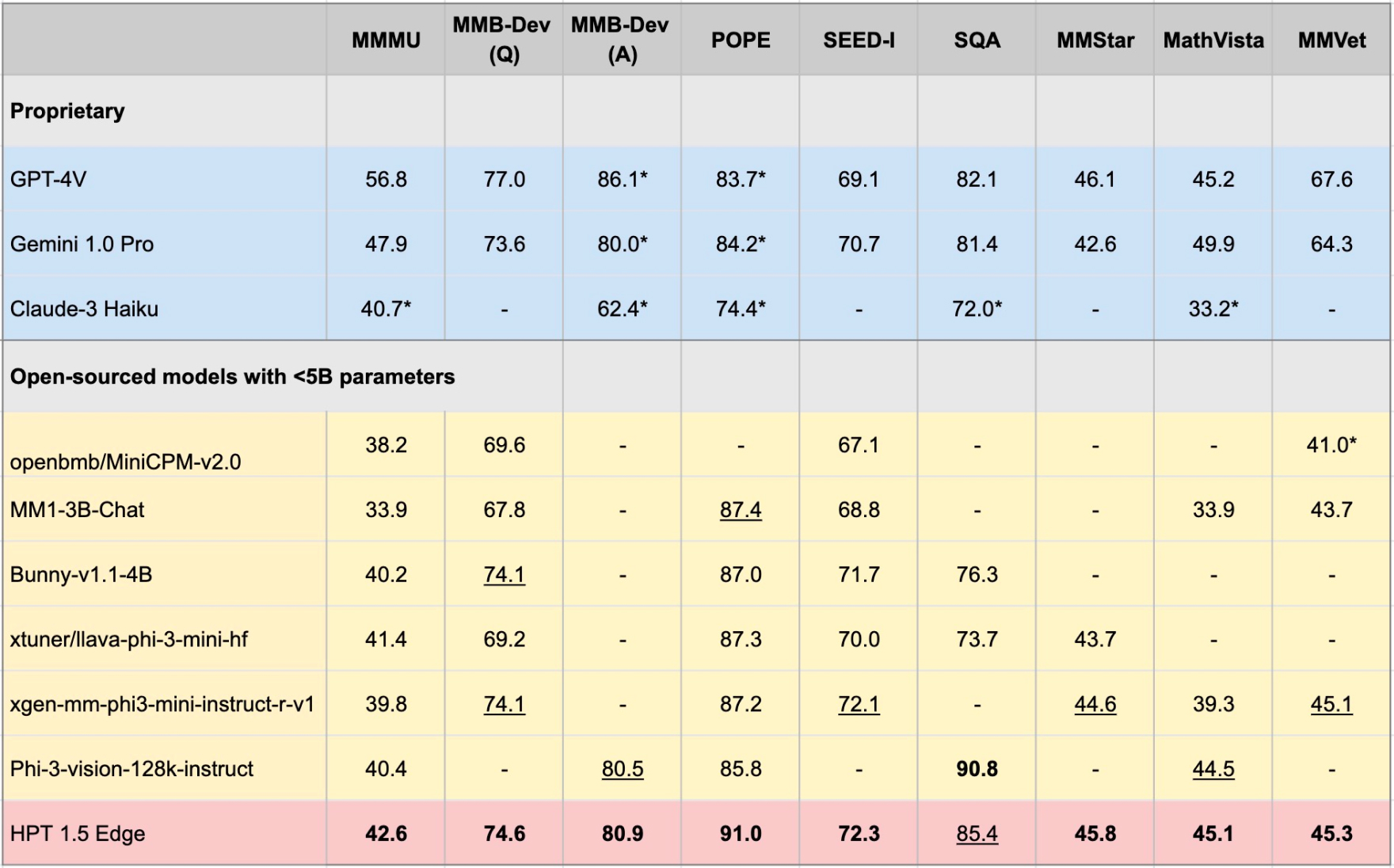
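The data flow described above can be traced as a simple shape calculation. The sketch below is illustrative only and is not the released implementation. It assumes that H-Former is a query-based connector that emits a fixed number of tokens, and `NUM_QUERY_TOKENS` is a hypothetical value, since this post does not state it. The hidden sizes are assumed from the public configurations of siglip-so400m-patch14-384 and Phi-3-mini and should be checked against the released checkpoint.

```python
from dataclasses import dataclass

# Assumed values: none of these numbers are stated in this post.
SIGLIP_HIDDEN_DIM = 1152      # assumed from the siglip-so400m-patch14-384 config
PHI3_MINI_HIDDEN_DIM = 3072   # assumed from the Phi-3-mini config
NUM_QUERY_TOKENS = 64         # hypothetical number of H-Former output tokens


@dataclass(frozen=True)
class TokenShape:
    tokens: int  # sequence length
    dim: int     # embedding width


def vision_tokens(image_size: int = 384, patch_size: int = 14) -> TokenShape:
    """Patch embeddings produced by the ViT for one square image."""
    patches_per_side = image_size // patch_size  # 384 // 14 = 27
    return TokenShape(patches_per_side ** 2, SIGLIP_HIDDEN_DIM)


def connector_tokens(vision: TokenShape,
                     num_queries: int = NUM_QUERY_TOKENS) -> TokenShape:
    """A query-based connector maps any number of patch embeddings to a
    fixed number of tokens in the LLM embedding space (assumed design)."""
    if vision.tokens <= 0:
        raise ValueError("expected at least one vision token")
    return TokenShape(num_queries, PHI3_MINI_HIDDEN_DIM)


def llm_input(visual: TokenShape, num_text_tokens: int) -> TokenShape:
    """Visual tokens are concatenated with the embedded text prompt."""
    return TokenShape(visual.tokens + num_text_tokens, visual.dim)


patches = vision_tokens()
visual = connector_tokens(patches)
sequence = llm_input(visual, num_text_tokens=32)
print(patches, visual, sequence)
```

Under these assumptions, a 384x384 image yields 729 patch embeddings, which the connector compresses before the LLM sees them. That keeps the LLM's sequence length, and therefore its compute, independent of the number of patches.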

Benchmark Performance

To better understand Edge's capabilities, we compare its performance with that of leading competitors, many of which are proprietary or have more parameters.

The majority of the results presented are taken from the models' original reports; the others are taken from the Phi-3-vision evaluations and are marked with an asterisk (*). Within the open-source category only, we bold the best result and underline the second-best result.

For MMB, we report the English version's dev set results at the standard question (Q) level and at the answer (A) level, which was used in Phi-3-vision-128k-instruct. Under both evaluation strategies, Edge outperformed the open-source baselines considered. Edge also performs strongly on the remaining benchmarks, achieving the best results among the open-source models on POPE, SEED-I, MMStar, MathVista, and MMVet. It is also worth noting that Edge reached or surpassed GPT-4V and Gemini 1.0 on these benchmarks, except on MMVet. The only exceptions are SQA, where Phi-3-vision-128k-instruct outperformed Edge, and MMVet, where GPT-4V and Gemini 1.0 Pro outperformed Edge. These benchmarks present promising avenues for improving Edge's capabilities, which we will focus on in later releases. Lastly, we wish to highlight that, although it starts from a pre-trained ViT and LLM, our HPT 1.5 Edge outperformed Phi-3-vision-128k-instruct on seven of the eight benchmarks considered.

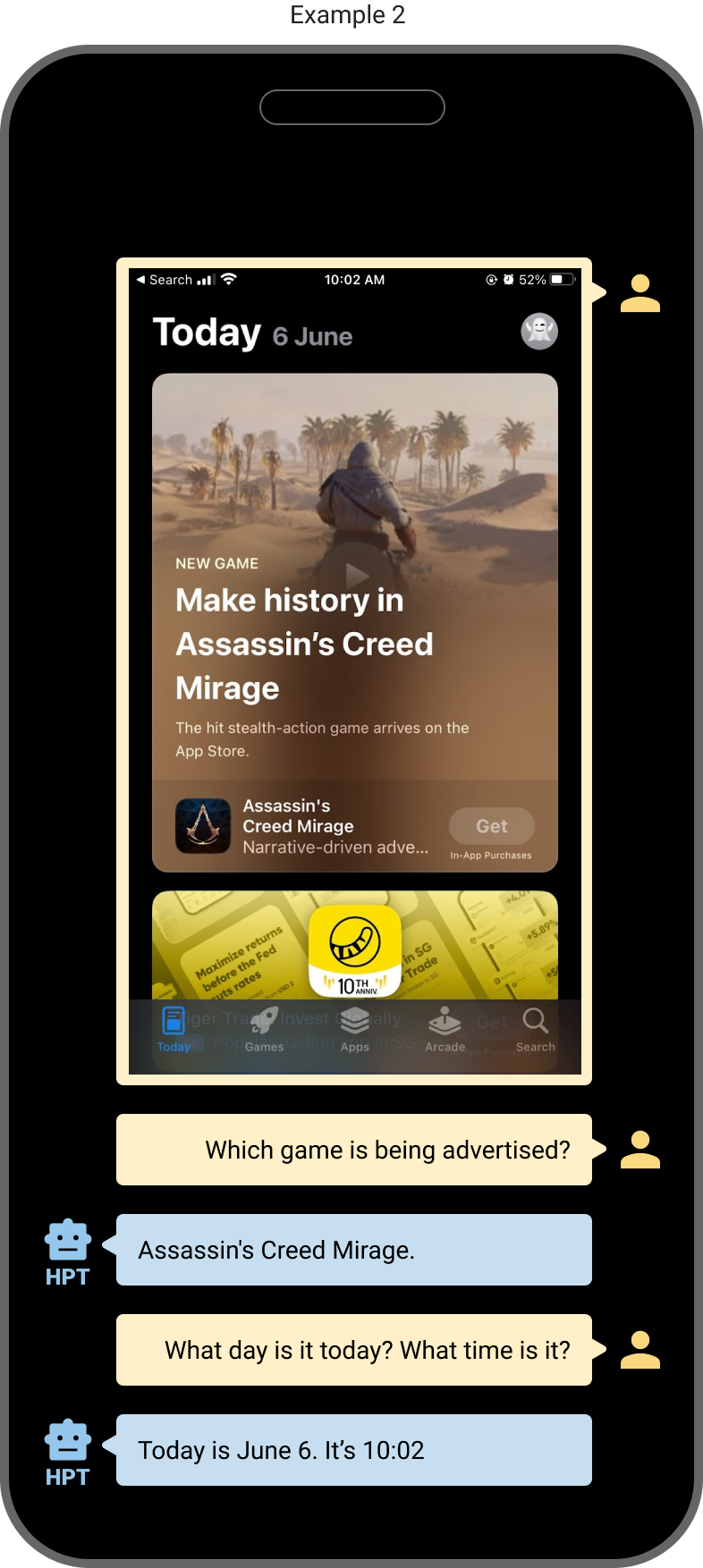

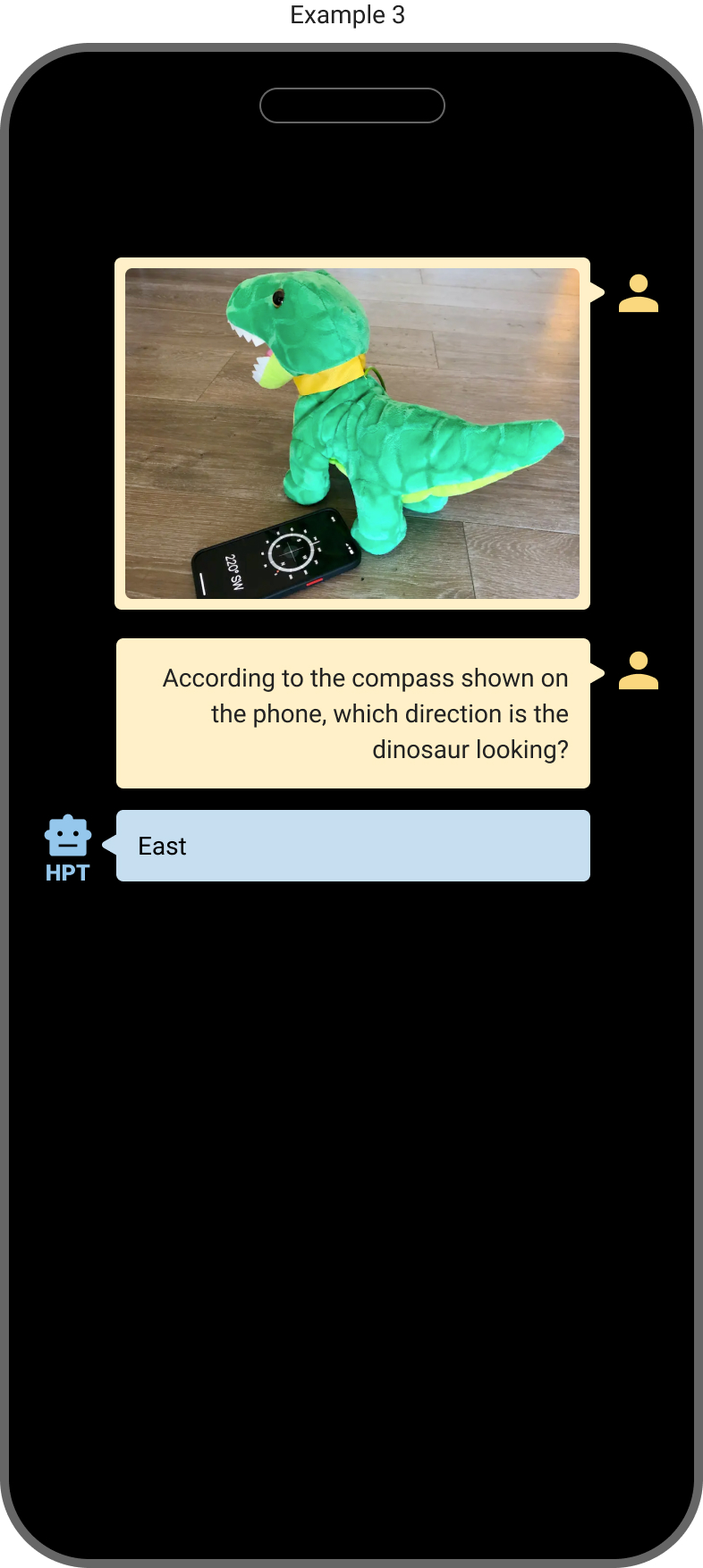

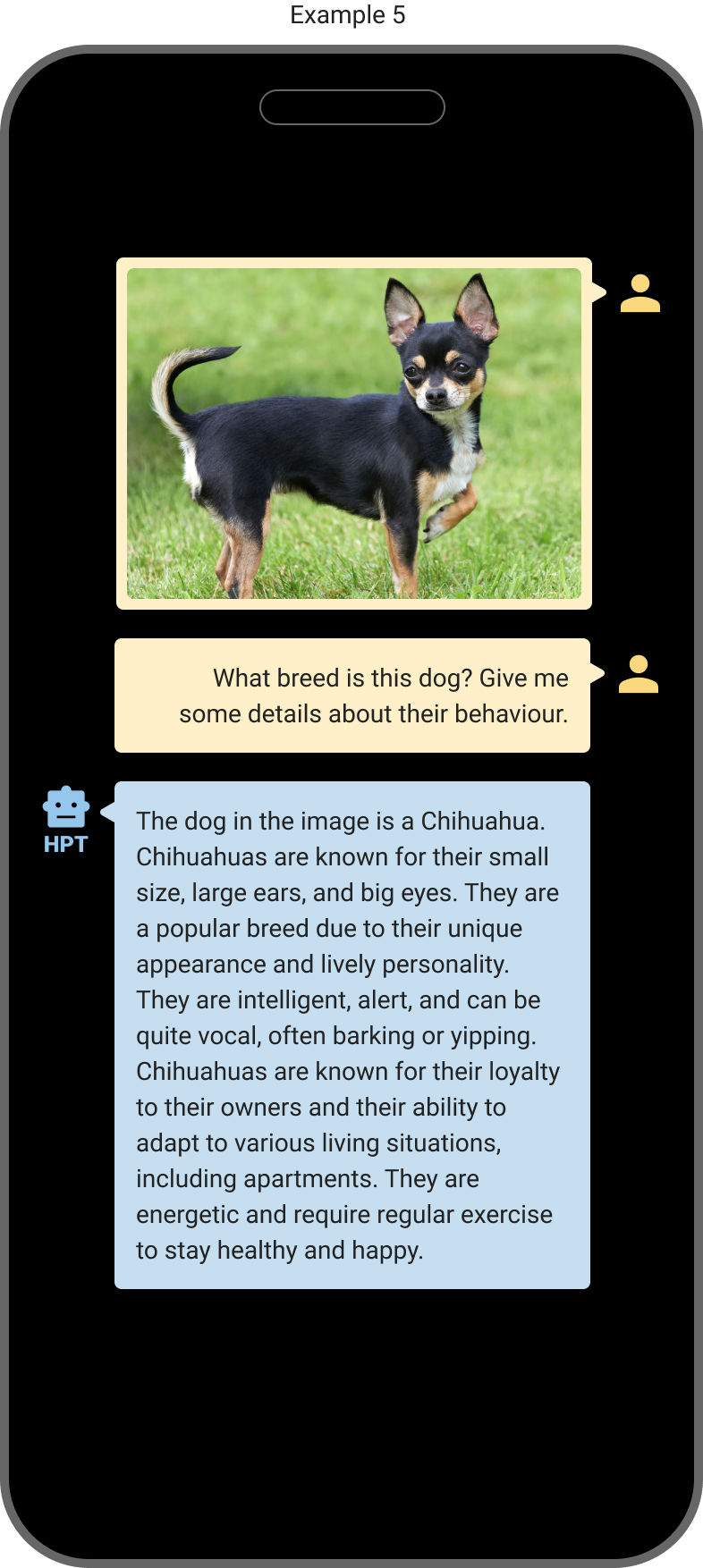

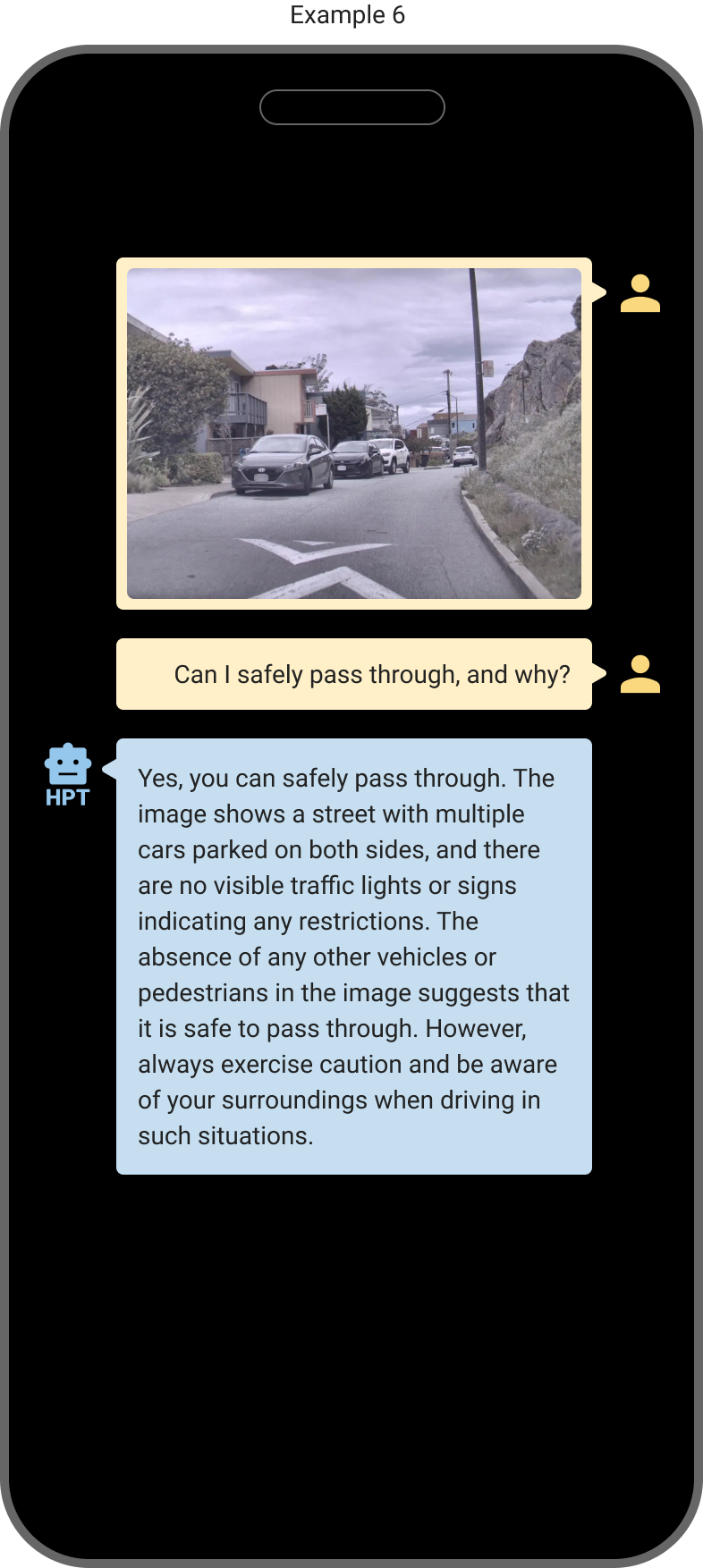

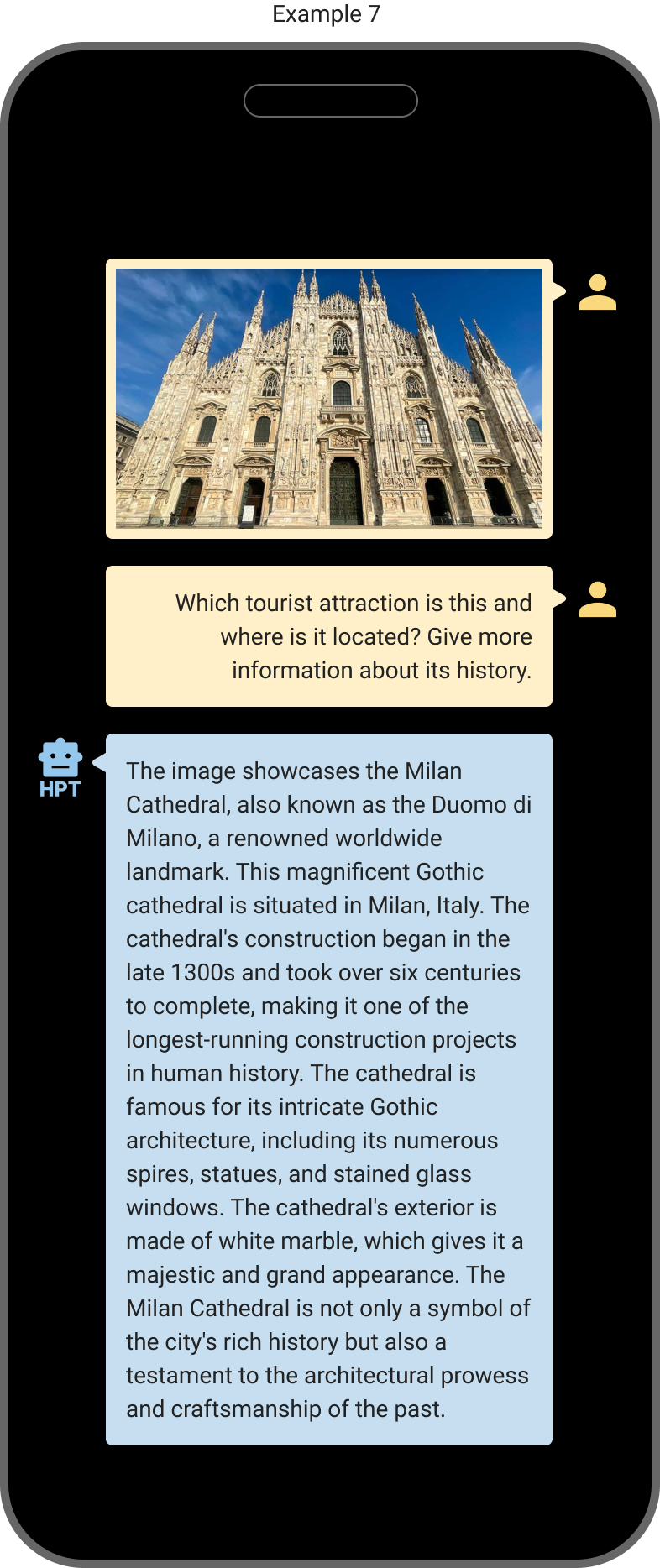

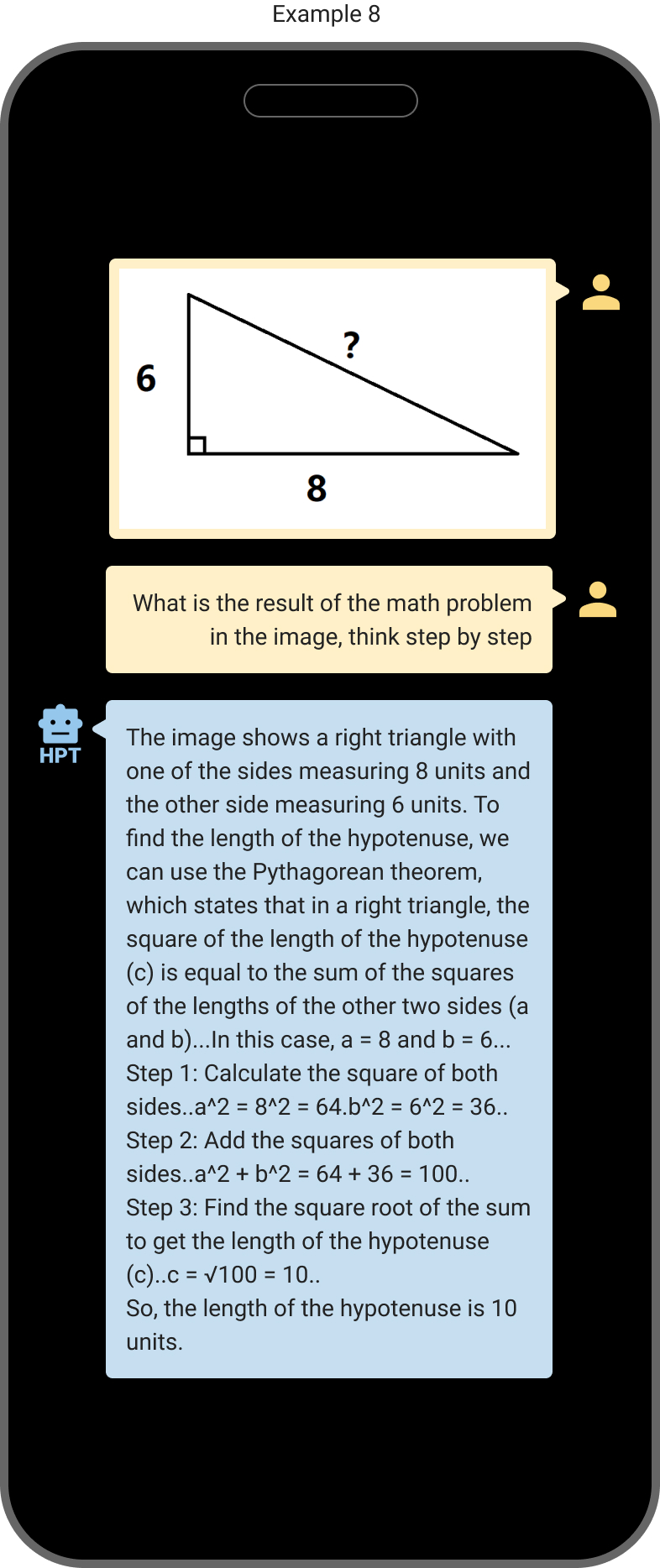

Examples

The Bottom Line

With the open-source release of HPT 1.5 Edge, we aim to bring high-quality, cost-efficient multimodal LLMs to applications on edge and mobile devices, reaching a wider audience at a much more affordable cost. With only 4.2B parameters, HPT 1.5 Edge can be efficiently used or fine-tuned on many consumer GPUs as well as on edge and mobile devices. Given its strong performance and impressive capabilities, we look forward to the adoption of our HPT models in a wide range of multimodal GenAI applications on edge and mobile devices.
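To make the hardware claim concrete, the following back-of-the-envelope sketch estimates the memory needed just to hold 4.2B parameters at several numeric precisions. It is a rough estimate under stated assumptions: it counts weights only (no activations, KV cache, or optimizer state), and the precisions listed are generic options, not formats this post says are released or validated for HPT 1.5 Edge.

```python
PARAM_COUNT = 4.2e9  # parameter count stated in this post

# Bytes per parameter for common numeric formats (generic, not HPT-specific).
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, nbytes in BYTES_PER_PARAM.items():
    print(f"{name:>9}: {weight_memory_gb(PARAM_COUNT, nbytes):.1f} GB")
```

At 16-bit precision the weights alone come to roughly 8.4 GB. Actual usage is higher once activations and the KV cache are included, and fine-tuning needs additional memory for gradients and optimizer state.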

How to Access HPT

Open-source release of HPT 1.5 Edge

- GitHub repo: https://github.com/hyperGAI/HPT

- HuggingFace: https://huggingface.co/HyperGAI/HPT1_5-Edge
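One possible way to fetch the released checkpoint from the Hugging Face link above is the `huggingface_hub` client library. This is a minimal sketch that assumes the package is installed (`pip install huggingface_hub`); the local directory name is a hypothetical choice, and loading or running the model should follow the instructions in the GitHub repo, which are not reproduced here.

```python
from pathlib import Path

REPO_ID = "HyperGAI/HPT1_5-Edge"  # taken from the Hugging Face URL above


def download_hpt_edge(local_dir: str = "hpt1_5-edge") -> Path:
    """Download every file in the model repo and return the local folder.

    `local_dir` is a hypothetical default; any writable path works.
    """
    # Imported lazily so that defining this function does not require
    # the package to be installed.
    from huggingface_hub import snapshot_download

    path = snapshot_download(repo_id=REPO_ID, local_dir=local_dir)
    return Path(path)
```

Calling `download_hpt_edge()` requires network access and enough disk space for the full set of model weights.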

Explore More

- Contact: Research at hpt@hypergai.com or Business at info@hypergai.com

- Follow us on: LinkedIn, X